Early History of Radiology (and Radon)

As the 19th century drew to a close, the world ushered in a golden age of innovation that would introduce us to stainless steel, diesel engines, and plastic. It was during this era that the emerging field of radiology was born. Wilhelm Röntgen discovered X-rays in 1895, Henri Becquerel observed uranium emitting its own “rays” in 1896, and Marie Curie coined the term “radioactivity” shortly thereafter in 1898. This new concept would both challenge and advance our understanding of physics, with repercussions that would not be fully realized until nearly fifty years later.

Radon was discovered in 1899 at McGill University in Canada, with the official credit usually going to Ernest Rutherford, Robert Owens, and Harriet Brooks. Rutherford and his team were actually studying the thorium decay chain, so what they had found was an isotope of radon that would eventually become known as thoron (²²⁰Rn). Other radiological pioneers around the world, such as Marie Curie and Friedrich Dorn, were also conducting their own experiments with uranium and radium.

At first, these scientists focused on the physical properties of radioactive elements. They calculated masses, densities, and melting points with surprising accuracy, especially given their limited instrumentation. They identified new isotopes, argued over names, and began conceiving practical – and profitable – applications. They tended to be reckless and gleeful with their discoveries in these early days, and it didn’t take long to commercialize the burgeoning field of radiology.

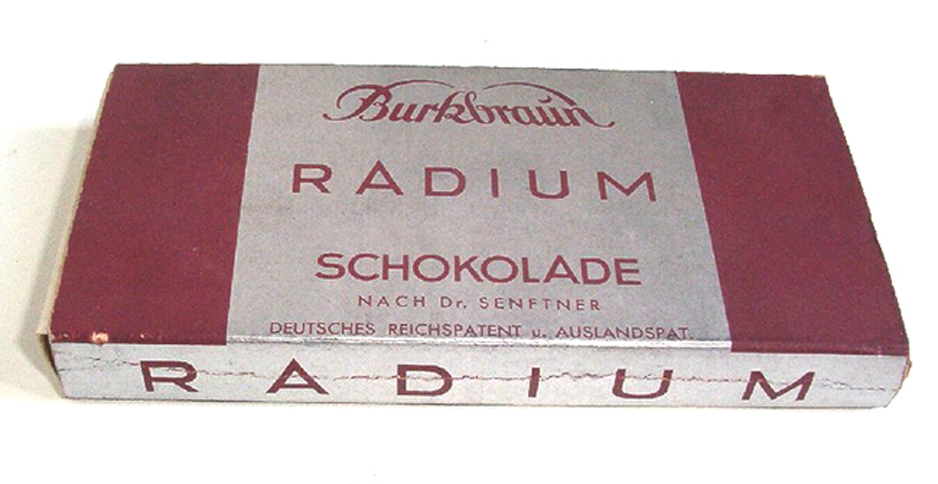

Toothpaste, cosmetics, drinking water, and even chocolate soon had “radium-infused” versions available to buy. Medical patients were exposed to ionizing radiation for hours at a time, and even shoe stores would offer customers complimentary X-ray scans to match the bones in their feet with “just the right footwear.”

(Yep, that's German for “Radium Chocolate.” It was manufactured between 1931 and 1936, and would later be lovingly referred to as “suicide chocolate” by British chemists.)

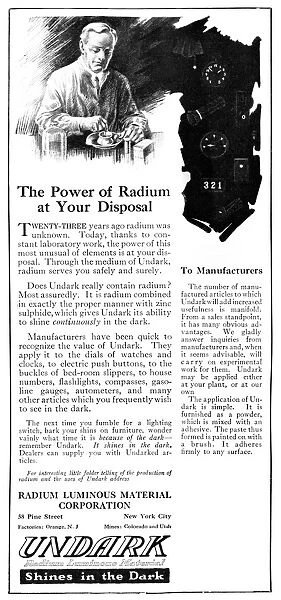

In retrospect, it’s easy to condemn our foolhardiness with these novel radioactive elements. But as the world had never before witnessed such rapid transformation, this daredevil enthusiasm can perhaps be understood – and forgiven – in the broader context of the times. Electricity, automobiles, and telephones had sparked a revolution in lifestyles and landscapes; fluorescent lights illuminated cities, hydrogen airships lumbered through the skies, and glow-in-the-dark clocks were nestled next to beds. The Modern Age had arrived, and humankind was intoxicated by its glitter and glamour.

The warning signs of exposure to ionizing radiation were often observed, but it proved difficult to pinpoint the cause. The initial “dose” didn’t seem to cause any harm, and the mechanisms of cellular damage from radiation were still unknown. Many scientists and inventors – including Nikola Tesla and Thomas Edison – experienced eye irritation and burns from their experiments, but such symptoms would often take weeks to manifest. And although Marie Curie urged caution when handling radioactive materials, many scientists claimed there were no ill effects at all. As a result, many of these radiological pioneers, including Marie Curie, would die from causes later attributed to long-term radiation exposure.

Although anecdotal evidence mounted over the years (such as the deaths of the Radium Girls in the 1920s), it was not until 1945 that the world fully awakened to the cataclysmic and destructive capabilities of ionizing radiation.

Approximately five years later, in 1950, radon’s presence in indoor air was first documented. Although studies were conducted over the next few decades, very little was accomplished with regard to public awareness or exposure prevention. This would change in 1984, when a fateful event brought recognition to the potential dangers of indoor radon concentrations. While working at a newly built nuclear power plant in Pennsylvania, a construction engineer triggered the radiation warning alarms. Interestingly enough, the alarms went off as the engineer was arriving at work – and before there was any radioactive material at the power plant.

The resulting investigation revealed that the engineer’s house contained indoor radon concentrations above 2500 picocuries per liter (pCi/L), a finding that catapulted radon to national awareness. Two years after this incident, the Environmental Protection Agency set its recommended “action level” at 4 pCi/L. This would mark the beginning of the radon testing and mitigation industry in the United States.

Author Biography:

Lorin Stieff is the Vice President of Rad Elec, where he assists with software development and technical support. Before writing this blog post, he had no idea that radium chocolate actually existed.